Vision

The Digital Twin Paradigm

In a world increasingly shaped by the green and digital transitions, many scientific and technological systems are becoming more complex, data-rich and dynamically interconnected. This evolution raises several scientific questions:

- How can complex systems be simulated in real time?

- How can models evolve continuously through data?

- How can uncertainty be quantified and propagated?

- How can large-scale simulations remain sustainable?

The common answer to these questions lies in the paradigm that guides a large part of the Rozza Group’s research activity: digital twins.

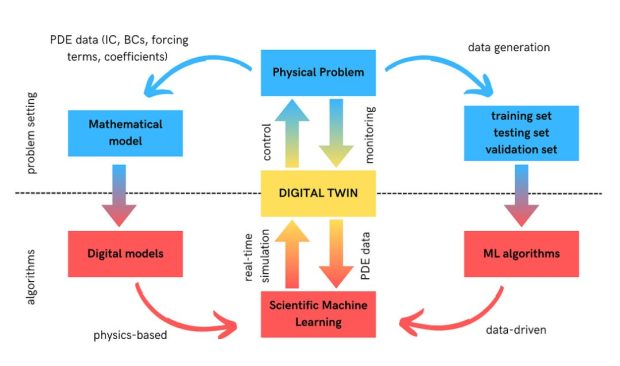

A digital twin can be understood as a virtual representation of a physical system, continuously updated through the integration of data, simulations, and observations. Unlike conventional computational models, digital twins are designed to evolve together with the system they represent, enabling continuous calibration, predictive analysis, and scenario exploration.
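As a minimal illustration of what "continuously updated" can mean in practice, the sketch below applies a scalar Kalman-type update to a single constant quantity each time a noisy measurement arrives. The state, the noise levels, and the function name `kalman_update` are hypothetical choices made for illustration; this is not a description of the group's own tools.

```python
import numpy as np

def kalman_update(mean, var, measurement, meas_var):
    """One Bayesian update of a scalar Gaussian estimate with a noisy measurement."""
    gain = var / (var + meas_var)            # weight given to the new measurement
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var             # uncertainty shrinks after each update
    return new_mean, new_var

# Hypothetical setup: the physical system has an unknown constant value 2.0,
# observed through a sensor with noise standard deviation 0.5.
rng = np.random.default_rng(0)
true_value = 2.0
meas_std = 0.5

mean, var = 0.0, 10.0                        # vague initial belief of the twin
for _ in range(200):
    z = true_value + rng.normal(0.0, meas_std)
    mean, var = kalman_update(mean, var, z, meas_std**2)

print(f"estimate = {mean:.3f}, variance = {var:.5f}")
```

Each new observation moves the estimate toward the data and reduces its variance, which is the simplest form of the continuous calibration described above.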

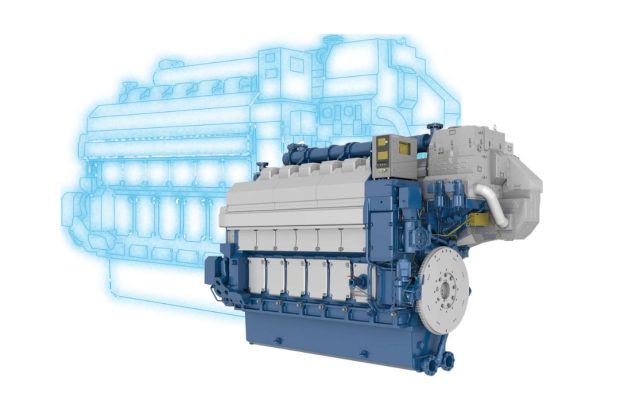

Image by Wartsila

Scientific Challenges of Digital Twins

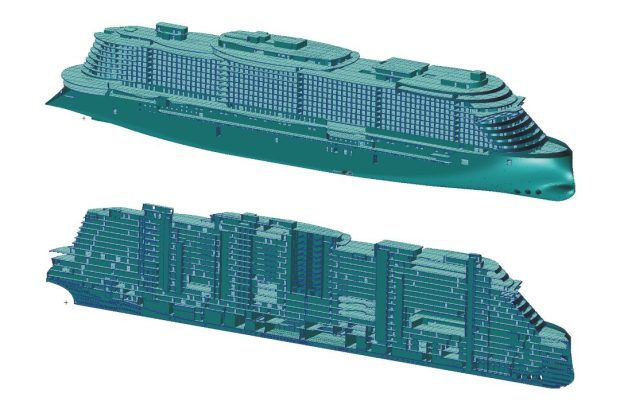

The development of digital twins is a multidisciplinary challenge involving mathematical modeling, scientific computing, data analysis, and real-time prediction. Building reliable and adaptive digital twins requires addressing a set of interconnected scientific and computational issues that arise across many application domains, including aerospace, naval engineering, energy, manufacturing, biomedicine, and environmental sciences.

1. Generalization

Digital twins must remain predictive when operating conditions, parameters, or system configurations change over time. Models trained on limited datasets often struggle to extrapolate reliably beyond the scenarios already observed.

2. Data Integration

Digital twins rely on data generated by simulations, experiments, and operational systems, often characterized by different formats, resolutions, and time scales. Such heterogeneous information sources must be integrated into a coherent representation.

3. Scalability

As digital twins become more detailed and data-intensive, the computational cost of simulations, updates, and analyses increases rapidly. Workflows must remain efficient even when they involve large-scale multiphysics models or real-time constraints.

4. Fidelity Preservation

Reducing the complexity of a computational model often risks losing relevant features, effects, or dependencies. The challenge is to maintain the essential behaviour of the original system while making repeated evaluations computationally feasible.

5. Uncertainty Propagation

Uncertainty may originate from incomplete data, uncertain parameters, noisy measurements, or modelling assumptions. Understanding how these uncertainties affect predictions is essential for assessing the reliability of a digital twin.
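A simple way to see how parameter uncertainty affects a prediction is Monte Carlo sampling: draw the uncertain parameter many times, evaluate the model for each draw, and summarize the spread of the outputs. The sketch below assumes a hypothetical forward model (exponential decay) and a Gaussian uncertainty on its rate; both are stand-ins chosen for illustration only.

```python
import numpy as np

def model(k, t=1.0, u0=1.0):
    """Hypothetical forward model: exponential decay u(t) = u0 * exp(-k * t)."""
    return u0 * np.exp(-k * t)

def propagate(mean_k, std_k, n_samples=10_000, seed=0):
    """Monte Carlo propagation of a Gaussian uncertainty on k through the model."""
    rng = np.random.default_rng(seed)
    k_samples = rng.normal(mean_k, std_k, size=n_samples)
    u_samples = model(k_samples)
    # Sample mean and sample standard deviation of the prediction
    return u_samples.mean(), u_samples.std(ddof=1)

mean_u, std_u = propagate(mean_k=1.0, std_k=0.1)
print(f"prediction: {mean_u:.4f} +/- {std_u:.4f}")
```

Plain Monte Carlo needs many model evaluations, which is one reason why inexpensive surrogate models matter when uncertainty has to be propagated through a costly simulation.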

Methodological Pillars of Digital Twins

From a scientific perspective, the development of digital twins requires the integration of different components from various research areas. This makes digital twins not only an application domain, but also a unifying research framework in which several methodological and technological challenges converge.

From Reduced-Order Models to Digital Twins

The research vision of the Rozza Group has progressively evolved towards a broader framework for real-time and data-enhanced computational modeling, in which digital twins emerge as the point of convergence of the different research lines developed by the group.

The Foundations

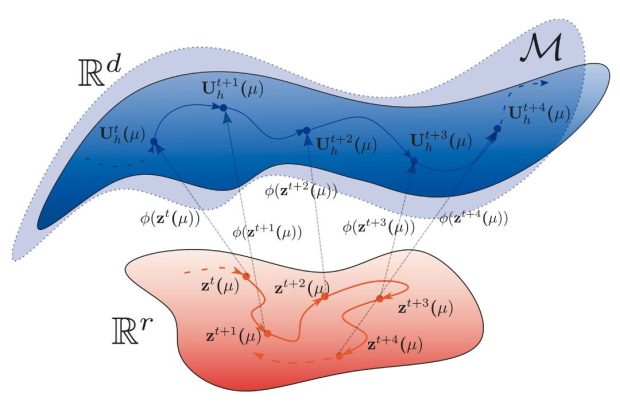

Reduced-order methods for parametrized PDEs

Early research activities were primarily centered on reduced basis methods, projection-based model reduction, and efficient numerical approximation. These methods were initially developed to make high-fidelity simulations computationally affordable in contexts involving repeated queries, optimization, and control.
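The core idea behind projection-based reduction can be sketched with proper orthogonal decomposition (POD): collect solution snapshots for several parameter values, extract a small orthonormal basis from their singular value decomposition, and represent new solutions in that basis. The example below is a minimal sketch under stated assumptions: the "high-fidelity solution" is a hypothetical closed-form function `exp(-mu * x)`, and the new solution is projected directly rather than obtained from a reduced (Galerkin) system, so it illustrates the compression step only.

```python
import numpy as np

def pod_basis(snapshots, energy_tol=1e-8):
    """Return the leading left singular vectors retaining 1 - energy_tol of the energy."""
    U, s, _ = np.linalg.svd(snapshots, full_matrices=False)
    energy = np.cumsum(s**2) / np.sum(s**2)
    r = int(np.searchsorted(energy, 1.0 - energy_tol)) + 1
    r = min(r, len(s))
    return U[:, :r]

# Hypothetical "high-fidelity" solutions u(x; mu) = exp(-mu * x) on a 200-point grid
x = np.linspace(0.0, 1.0, 200)
train_mus = np.linspace(1.0, 3.0, 30)
snapshots = np.column_stack([np.exp(-mu * x) for mu in train_mus])

V = pod_basis(snapshots)                 # reduced basis, shape (200, r)
r = V.shape[1]

# Represent a solution for an unseen parameter value in the reduced basis
u_new = np.exp(-2.05 * x)
coeffs = V.T @ u_new                     # r coefficients instead of 200 values
u_reduced = V @ coeffs
rel_error = np.linalg.norm(u_new - u_reduced) / np.linalg.norm(u_new)

print(f"basis size r = {r}, relative projection error = {rel_error:.2e}")
```

A handful of basis functions is enough here because the solution depends smoothly on the parameter; problems with less regular parameter dependence typically need larger bases or nonlinear reduction techniques.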

The Expansion

Uncertainty quantification, optimization, industrial-scale simulation

Over time, the research expanded toward uncertainty quantification, stochastic modeling, and Bayesian approaches. In parallel, the group increasingly addressed geometrical parametrization, multiphysics systems, and industrial-scale applications, extending reduced-order techniques to more complex and realistic scenarios.

The Convergence

Scientific machine learning, data assimilation, hybrid modeling

More recently, the integration of scientific machine learning and data-driven methods has enhanced reduced-order methods, especially in nonlinear and turbulent regimes. These developments have progressively connected model reduction, uncertainty quantification, high-performance computing, and data assimilation into a common framework.

Our Alignment with International Research Agendas

Our focus on digital twins is consistent with recent international agendas on these technologies, which emphasize the need for new mathematical, computational, and data-driven methods enabling reliable and adaptive representations of complex systems.